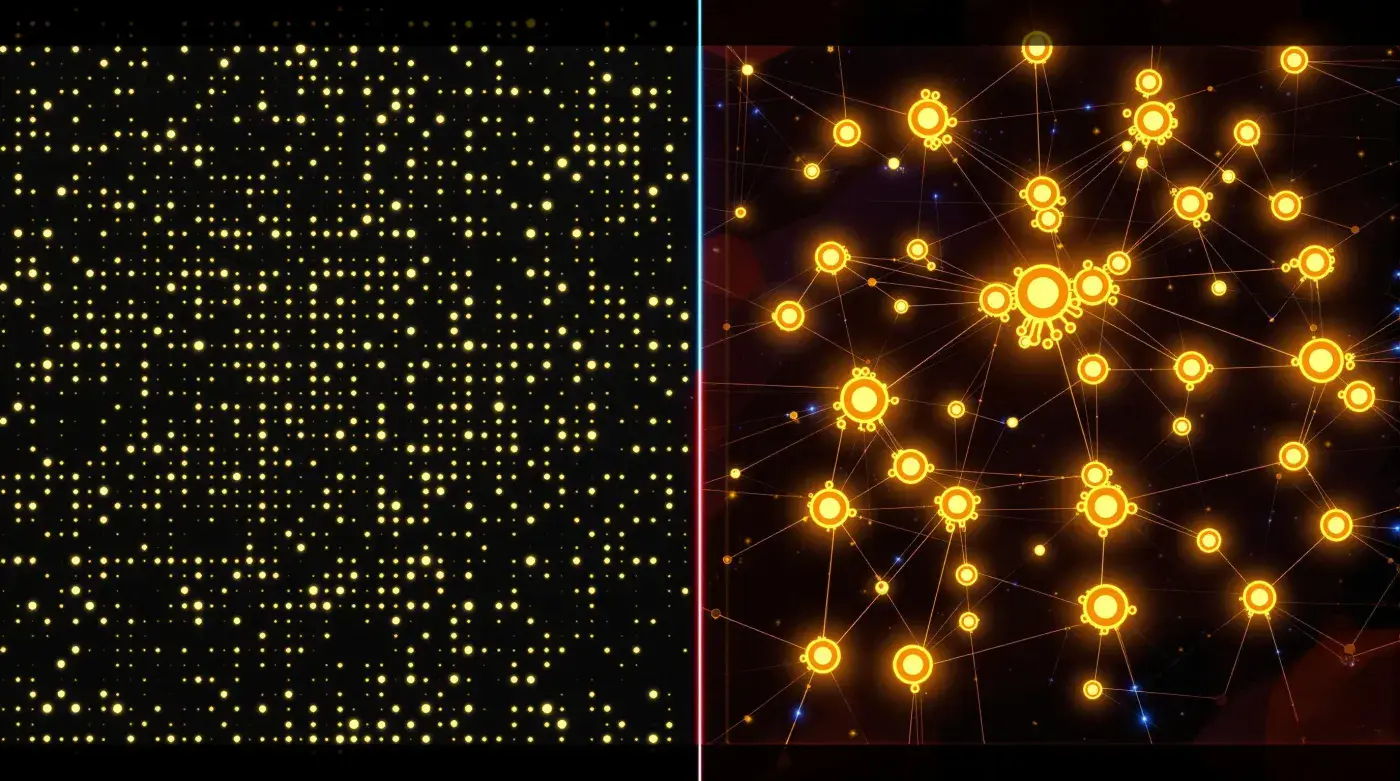

When it comes to large language models (LLMs), not all architectures are built the same. Imagine two contrasting approaches to organizing a large enterprise: In one, every employee is trained to handle any task that comes their way. In the other, specialists focus on their areas of expertise, with an efficient system routing work to the right expert. These two philosophies mirror the fundamental difference between dense models and experts-based models in AI.

Dense models, like a versatile workforce where everyone participates in every project, engage all their resources for every task. In contrast, experts-based models operate more like a specialized team where only relevant experts are called upon for specific challenges. These architectural choices have far-reaching implications for scalability, efficiency, and practical applications.

In this article, we'll explore these two approaches in depth, examining how they work, why they exist, and where each excels. We'll also look at their historical context through earlier, largely superseded models that laid the groundwork for today's architectures, and peek into the future with emerging hybrid models that aim to combine the best of both worlds.

---

The Dense Model: The Classic Heavyweight

Dense models such as GPT-3 represent the quintessential LLMs; GPT-4 is often grouped with them, although OpenAI has not published its architecture. Think of them as massive libraries where every book is consulted for every query. They're called "dense" because every parameter in the model participates in processing every input token, regardless of the task's complexity.

Advances in computational power and energy-efficient hardware have made dense models increasingly viable, even at massive scales. Like modern cities developing better infrastructure to support growing populations, AI accelerators such as GPUs and TPUs have enabled faster processing for dense architectures. Meanwhile, developments in low-power chips are helping reduce energy costs, pointing to a future where dense models become more accessible for a broader range of applications.

How Dense Models Work

Dense models are trained on massive datasets, where they learn patterns in language, relationships between words, and contextual nuances. The key characteristics include:

Uniform Parameter Utilization:

- Every parameter activates for each task, like a full orchestra playing every piece

- The entire model processes each input, similar to a council where everyone votes

- Full computational power deployed for every query, regardless of complexity

Generalized Knowledge:

- Develops broad understanding across all domains, like a renaissance scholar

- Handles diverse tasks without specific tuning, similar to a versatile production line

- Maintains consistent performance across different types of queries

High Computational Cost:

- Significant processing power required for operation

- Resource-intensive training and deployment

- Substantial memory needed to hold every weight during inference
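
To make the "every parameter participates" idea concrete, here is a minimal sketch of a dense feed-forward block in plain Python. The weights, sizes, and function names (`dense_ffn`, `count_params`) are made up for illustration and are not taken from any real model.

```python
def dense_ffn(x, w1, w2):
    """Toy dense feed-forward block: every weight in w1 and w2 is used."""
    # Hidden layer with ReLU: each hidden unit reads the whole input vector.
    hidden = [max(0.0, sum(xi * w for xi, w in zip(x, row))) for row in w1]
    # Output layer: each output unit reads the whole hidden vector.
    return [sum(h * w for h, w in zip(hidden, row)) for row in w2]


def count_params(*matrices):
    """Count the individual weights across all weight matrices."""
    return sum(len(row) for matrix in matrices for row in matrix)


# Hypothetical tiny weights: 2 inputs -> 3 hidden units -> 2 outputs.
w1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # one row per hidden unit
w2 = [[1.0, 0.0, 1.0], [0.0, 1.0, -1.0]]    # one row per output unit

x = [2.0, 3.0]
y = dense_ffn(x, w1, w2)          # [7.0, -2.0]
total = count_params(w1, w2)      # 12 weights in the block
active = total                    # dense: all 12 take part in every forward pass
```

However simple or complex the input, the amount of work stays the same, because no weight is ever skipped.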

Why Dense Models Exist

Dense models prioritize generality and simplicity in architecture. They excel in scenarios where:

Universal Application:

- A single model handles diverse tasks effectively

- Consistent performance is crucial across different domains

- Broad knowledge integration is necessary

Resource Availability:

- High computational power is readily accessible

- Energy efficiency is not a primary constraint

- Robust infrastructure supports intensive processing

Performance Priority:

- State-of-the-art results are the primary goal

- Versatility takes precedence over specialization

- Consistent quality across all outputs is essential

Examples of Dense Models

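GPT-3 and GPT-4 (OpenAI):

- GPT-3 is a documented dense transformer; OpenAI has not published GPT-4's architecture, so listing it here is an assumption

- Widely used for versatile text generation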

- Demonstrate remarkable reasoning and problem-solving capabilities

- Handle diverse tasks from creative writing to technical analysis

- Set benchmarks for general-purpose AI performance

BERT (Google):

- Pioneered bidirectional understanding in transformers

- Excels in language comprehension tasks

- Widely used for natural language understanding

- Influenced many subsequent model architectures

XLNet:

- Autoregressive model that uses permutation language modeling to capture bidirectional context, combining ideas from BERT and GPT

- Sophisticated handling of contextual predictions

- Innovative approach to sequence learning

- Enhanced performance on various language tasks

Tasks Where Dense Models Shine

Creative and General Content:

- Writing and content generation across genres

- Complex narrative development

- Nuanced language understanding

- Sophisticated text completion and enhancement

Cross-Domain Applications:

- Multi-step reasoning across different fields

- Integration of diverse knowledge domains

- Complex problem-solving requiring broad knowledge

- Versatile task handling without specialization

Technical Capabilities:

- Code generation across programming languages

- General debugging and problem analysis

- System design and architecture suggestions

- Broad technical documentation generation

---

Experts-Based Models: The Specialized Task Force

Experts-based models, more commonly called mixture-of-experts (MoE) models, represent a fundamentally different approach to AI architecture. Like a modern corporation that maintains specialized departments (marketing, engineering, finance), these models activate only the most relevant components for each input. This selective activation, similar to how a film studio assembles specific teams for different productions, allows for efficient resource allocation and specialized expertise.

Because only a few experts run for any given input, experts-based models are well positioned for future scaling. Just as modern manufacturing uses automated systems to route work to specialized production lines, these models use a learned routing mechanism (often called a gate or router) to direct inputs to the right expert components. This lets them grow to very large total parameter counts without a proportional increase in the compute, and therefore energy, spent per token.

How Experts-Based Models Work

Experts-based models introduce mechanisms to dynamically select which parts of the model should be active for a given query. Key features include:

Sparse Parameter Utilization:

- Only a few experts activate for each input, typically chosen per token rather than per task

- Dynamic selection based on input characteristics

- Efficient resource allocation through specialization

Specialized Knowledge:

- Experts can come to focus on specific kinds of input, though this specialization is learned and does not always match human-defined domains

- Deep capability development in targeted areas

- Efficient handling of domain-specific queries

Scaling Efficiency:

- Reduced computational requirements through selective activation

- Better resource utilization through specialization

- Ability to scale to larger total parameter counts
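
The selection mechanism described above can be sketched as top-k routing: a small gate scores every expert, and only the k best-scoring experts run. This is a simplified illustration, not the code of any specific model. The experts, the gate weights, and the names `route_top_k` and `moe_layer` are all hypothetical.

```python
import math


def softmax(scores):
    """Turn raw gate scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(token, gate_weights, k):
    """Return [(expert_index, weight)] for the k highest-scoring experts."""
    scores = [sum(t * w for t, w in zip(token, row)) for row in gate_weights]
    probs = softmax(scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    # Renormalize so the weights of the chosen experts sum to 1.
    return [(i, probs[i] / norm) for i in top]


def moe_layer(token, gate_weights, experts, k=2):
    """Run only the selected experts and blend their outputs."""
    chosen = route_top_k(token, gate_weights, k)
    out = [0.0] * len(token)
    for idx, weight in chosen:
        expert_out = experts[idx](token)   # unselected experts are never called
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out, [idx for idx, _ in chosen]


# Four hypothetical "experts", each just a simple function on the token vector.
experts = [
    lambda v: [2.0 * a for a in v],
    lambda v: [a + 1.0 for a in v],
    lambda v: [-a for a in v],
    lambda v: [0.0 for _ in v],
]
# Hypothetical gate: one row of weights per expert.
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

output, used = moe_layer([3.0, 1.0], gate_weights, experts, k=2)
# used == [0, 1]: experts 2 and 3 did no work for this token.
```

In real systems the gate is trained together with the experts, so the routing decisions are learned rather than hand-written as they are here.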

Why Experts-Based Models Exist

Experts-based models address key challenges in scaling LLMs:

Resource Optimization:

- Reduced computational costs for operation

- Efficient energy usage through selective activation

- Better resource allocation for specific tasks

Domain Specialization:

- Superior performance on specialized tasks

- Efficient handling of domain-specific queries

- Deep expertise development in targeted areas

Scalability Benefits:

- Total parameter count can grow without a matching rise in compute per token

- Efficient resource use enables larger models

- Better handling of specialized workloads
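
A quick back-of-the-envelope calculation shows why selective activation helps with scaling. The sizes below are invented for illustration and do not describe any particular model. `shared_params` stands for the parts of the network that every token passes through.

```python
def moe_param_counts(shared_params, params_per_expert, num_experts, experts_per_token):
    """Return (total parameters stored, parameters active for one token)."""
    total = shared_params + num_experts * params_per_expert
    active = shared_params + experts_per_token * params_per_expert
    return total, active


# Hypothetical model: 2B shared parameters, 8 experts of 1B each, 2 experts per token.
total, active = moe_param_counts(
    shared_params=2_000_000_000,
    params_per_expert=1_000_000_000,
    num_experts=8,
    experts_per_token=2,
)
fraction = active / total   # 0.4: each token touches 40% of the stored weights
```

Note the trade-off: compute per token follows `active`, but memory still has to hold all `total` parameters, since any expert may be needed for the next token.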

Examples of Experts-Based Models

Switch Transformer (Google):

- Influential mixture-of-experts transformer that routes each token to a single expert

- Efficient parameter utilization through routing

- Scalable design for large-scale deployment

- Demonstrated benefits of sparse activation

GShard (Google):

- Sharded mixture-of-experts transformer built for distributed training

- Efficient scaling across multiple systems

- Top-2 gating that sends each token to two experts

- Optimized resource utilization

Tasks Where Experts-Based Models Excel

Specialized Processing:

- Technical documentation and analysis

- Domain-specific problem solving

- Specialized code generation and review

- Complex technical queries

Resource-Constrained Applications:

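- Serving workloads where compute per token, rather than memory, is the bottleneck (all experts must still be held in memory, which complicates mobile and edge deployment)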

- Efficient large-scale operations

- Distributed system implementation

- Power-efficient processing

Domain-Specific Tasks:

- Legal and medical analysis

- Technical documentation

- Specialized research support

- Industry-specific applications

---

Early Architectures: The Evolution of AI

The journey to today's sophisticated models mirrors the evolution of many industries. Just as manufacturing evolved from craftsmen's workshops to assembly lines to modern smart factories, AI architectures have undergone significant transformations:

n-Gram Models: The First Tools

- Simple statistical pattern matching over short word sequences, like early mechanical calculators

- Limited to basic operations, similar to single-purpose machines

- Valuable for their time but ultimately too limited for modern needs

- Historical importance in laying foundational concepts
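
For a sense of how simple these early tools were, here is a minimal bigram (2-gram) sketch that predicts the next word purely from counts. The corpus and the function names are made up for this example.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)

guess = predict_next(model, "the")      # "cat" (seen twice, versus "mat" once)
unknown = predict_next(model, "slept")  # None: nothing ever followed "slept"
```

The model only ever looks one word back, which is exactly the limitation that later architectures set out to overcome.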

Recurrent Neural Networks (RNNs): The First Assembly Lines

- Sequential processing, like early production lines

- Maintained basic memory of previous steps, similar to batch processing

- Limited by their linear nature, like single-track assembly systems

- Contributed important concepts about information flow and memory
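
The sequential nature of RNNs can be sketched with a one-dimensional toy version. Real RNNs use vectors and weight matrices; the scalar weights `w_h` and `w_x` here are arbitrary illustrative values.

```python
import math


def rnn_step(h_prev, x, w_h, w_x):
    """One recurrent update: mix the previous hidden state with the new input."""
    return math.tanh(w_h * h_prev + w_x * x)


def run_rnn(inputs, w_h=0.5, w_x=1.0):
    """Process inputs strictly in order, carrying the hidden state forward."""
    h = 0.0
    states = []
    for x in inputs:              # step t cannot start until step t-1 has finished
        h = rnn_step(h, x, w_h, w_x)
        states.append(h)
    return states


# A single "event" followed by silence: the memory of it fades step by step.
states = run_rnn([1.0, 0.0, 0.0])
```

Each step depends on the one before it, so the loop cannot be run in parallel across the sequence, and the influence of early inputs shrinks as the sequence goes on.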

Seq2Seq Models: The Early Automation

- Introduced systematic information processing, like automated manufacturing

- Began handling complex sequences, similar to multi-stage production

- Limited by sequential bottlenecks, like linear production lines

- Paved the way for attention mechanisms and today's parallel transformer architectures

---

Key Differences: A Side-by-Side Comparison

| Feature | Dense Models | Experts-Based Models | Real-World Parallel |
|---|---|---|---|
| Parameter Utilization | All parameters active | Only routed experts active per token | Full factory vs. Specialized production lines |
| Resource Efficiency | High computational cost | Efficient resource use | Mass production vs. Just-in-time manufacturing |
| Scalability | Limited by hardware resources | Scales through specialization | Monolithic facility vs. Modular expansion |
| Task Handling | Versatile but resource-intensive | Specialized and efficient | General assembly vs. Specialized production |
| Learning Approach | Broad, generalized learning | Focused expertise development | Universal training vs. Specialized certification |
| Response Time | Consistent but potentially slower | Variable, based on routing | Assembly line vs. Custom workshop |
| Examples | GPT-3, BERT, XLNet | Switch Transformer, GShard | Department store vs. Specialty boutiques |

---

The Rise of Hybrid Models: The Future of AI Architecture

Modern industry often combines mass production with specialized manufacturing, and AI is following a similar path. Hybrid models represent an innovative approach that blends the strengths of both dense and experts-based architectures:

Integrated Processing:

- Combines general capabilities with specialized expertise

- Balances resource efficiency with versatility

- Adapts processing approach based on task requirements

- Optimizes performance across diverse applications
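
One possible reading of "combining general capabilities with specialized expertise" is a layer with an always-on shared path plus a routed expert. The sketch below is an assumption about what such a hybrid could look like, not a description of any specific published model. The `shared`, `experts`, and `router` functions are hypothetical stand-ins.

```python
def hybrid_layer(token, shared, experts, router):
    """Always-on shared path plus one routed expert (an assumed hybrid design)."""
    idx = router(token)                  # pick a single specialist for this token
    shared_out = shared(token)           # general-purpose path, runs for every token
    expert_out = experts[idx](token)     # specialized path, runs only if selected
    return [s + e for s, e in zip(shared_out, expert_out)], idx


# Hypothetical components, chosen only to keep the arithmetic easy to follow.
shared = lambda v: [0.5 * a for a in v]
experts = [
    lambda v: [a + 1.0 for a in v],
    lambda v: [a - 1.0 for a in v],
]
router = lambda v: 0 if sum(v) >= 0 else 1   # a hand-written rule, not a learned gate

out_pos, used_pos = hybrid_layer([2.0, 4.0], shared, experts, router)
out_neg, used_neg = hybrid_layer([-2.0, -4.0], shared, experts, router)
# out_pos == [4.0, 7.0] via expert 0; out_neg == [-4.0, -7.0] via expert 1
```

The shared path gives every input a baseline of general processing, while the routed path adds capacity that is only paid for when it is selected.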

Future Developments:

- Advanced routing systems for optimal task distribution

- Improved integration between general and specialized processing

- Enhanced resource management for optimal efficiency

- Sophisticated task analysis for processing decisions

As AI continues to evolve, these architectural innovations will shape how we build and deploy increasingly capable systems. Just as modern industry benefits from both mass production and specialized manufacturing, the future of AI likely lies in thoughtfully combining different approaches to create more efficient and capable systems.