When a company says "AI-first," do users hear "a faster, better product" or "the people who made this useful are being removed"?

That distinction is the whole Duolingo lesson.

As of February 13, 2026, Duolingo is one of the clearest public case studies in how AI strategy can be simultaneously correct at the systems level and fragile at the trust level. The company showed real leverage in content throughput and operational scale. It also triggered significant backlash when parts of the narrative sounded like replacement rather than augmentation.

If you only read this story as "AI failed," you miss the operational upside. If you only read it as "users overreacted," you miss the trust mechanics that determine durable product economics.

This matters for any team shipping AI into customer-facing workflows. In this essay, you will get a practical model for separating three layers that people keep collapsing into one headline: productivity architecture, public narrative, and business volatility. Once those layers are separated, better decisions become obvious.

This is an operating analysis, not investment advice.

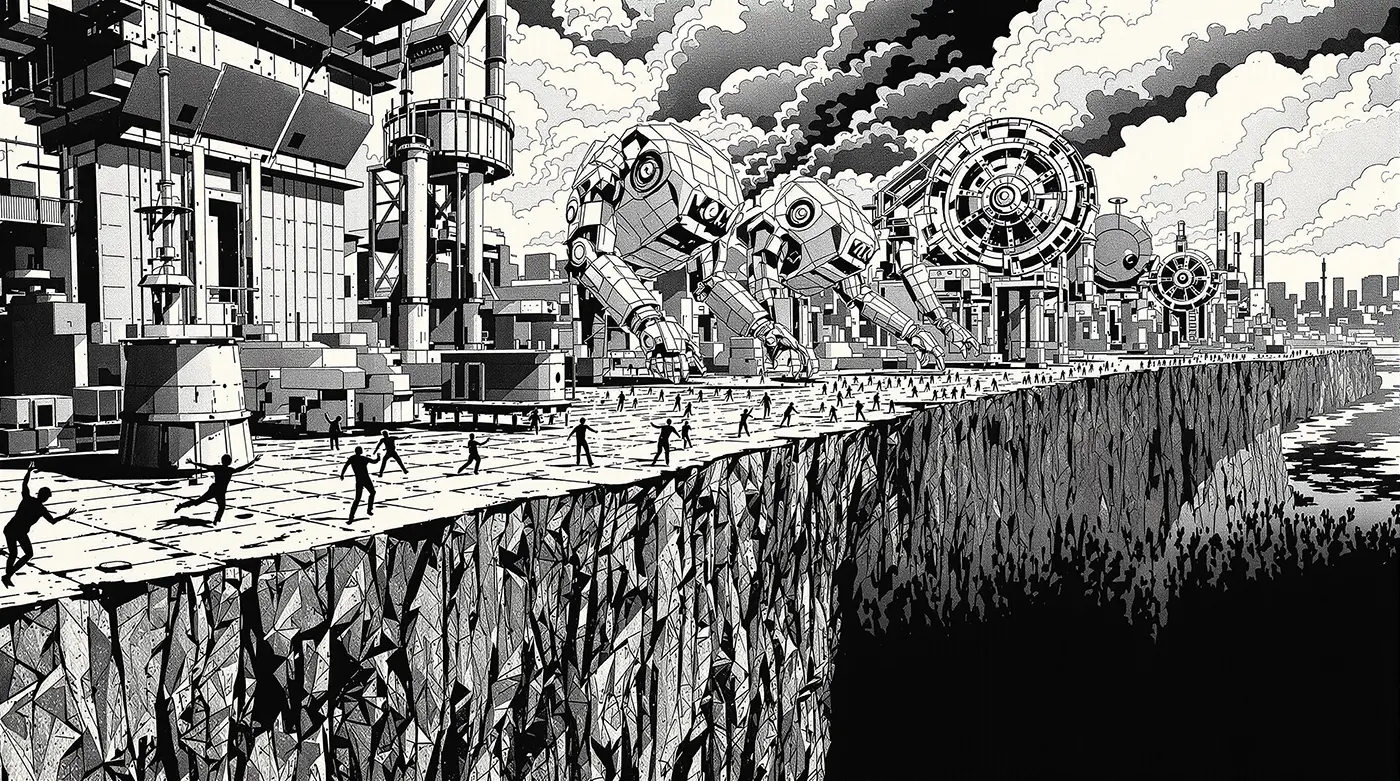

It is also a warning about sequencing. When automation capability improves faster than trust signaling, leadership tends to read internal wins as external wins. That is where execution confidence becomes brittle. Users and markets are not reacting to your internal architecture diagram. They are reacting to the experience contract they believe you are offering.

Key idea / thesis: AI strategy succeeds when automation increases user value while preserving visible human accountability at quality boundaries.

Why it matters now: Many companies are adopting AI-first language faster than they are designing AI-first trust systems.

Who should care: Product leaders, founders, operators, and teams responsible for customer-facing AI transitions.

Bottom line / takeaway: Use AI aggressively for throughput, but never outsource trust architecture to slogans about replacement.

| Strategic layer | Weak framing | Durable framing |
|---|---|---|
| Automation posture | "Replace humans wherever possible" | "Automate repetitive work, preserve human judgment where trust risk concentrates" |
| Customer narrative | Internal efficiency story | User-value and quality-accountability story |
| Operating metric | Output velocity only | Velocity plus quality stability plus trust retention |

Why the public narrative became distorted

Duolingo's public story got compressed into a single emotional meme. That is predictable in social media cycles, but dangerous for operators trying to learn from the case.

Several distinct moves were treated as one event: pipeline optimization choices, high-visibility marketing theater, and explicit language about AI-first operating direction. Each of those has different goals and different risk surfaces.

When teams bundle them into a single "AI replacement" narrative, diagnosis quality drops. The organization then reacts to sentiment rather than root cause.

A better analysis separates timeline from interpretation. What changed in the operating system? What changed in user perception? What changed in market expectations? Those answers are correlated but not identical.

The fastest way to mislearn this case is to collapse execution mechanics and narrative reaction into one causal claim.

The operational upside was real

There is a reason many product teams studied Duolingo's AI posture closely. The company did what many teams only talked about. It treated AI as infrastructure for scaling content operations, not as a novelty feature.

For language learning platforms, content breadth and adaptation speed are core constraints. If creation and iteration loops are too slow, product quality eventually plateaus. AI can materially improve this by accelerating draft generation, variant production, localization support, and first-pass quality triage.

That is not hype. It is workflow leverage.

Here is what worked, and what other teams should copy:

- AI applied to repetitive transformation and expansion work.

- Faster iteration loops in parts of the content pipeline.

- Clear willingness to redesign internal workflows around new tooling.

Teams that ignore this upside are not being principled. They are usually deferring a redesign they will have to do anyway.

Where replacement framing created fragility

The downside did not come from AI usage alone. It came from trust interpretation when users and creators perceived a shift from "AI helps us serve you" to "people are optional now."

At this point, language matters as much as tooling. In consumer products, brand trust is a production asset. When users feel a product is becoming less human in the wrong places, they stop evaluating feature velocity and start evaluating intent.

That perception shift can happen even when internal productivity genuinely improves. This is why "we are more efficient now" is often a weak external message. Customers do not buy internal efficiency. They buy better outcomes and confidence that quality is still owned.

The practical implication is simple. Any AI-first transition needs explicit trust architecture, not just automation architecture.

A three-layer model for AI transitions

If your team is trying to avoid this failure mode, use this operating split.

Layer 1: productivity architecture

This layer asks whether AI reduces cycle time, lowers repetitive workload, and improves iteration economics in target workflows.

Layer 2: quality accountability architecture

This layer asks who owns correctness at high-risk boundaries, how regressions are detected, and how release gates adapt when automation volume rises.

Layer 3: trust communication architecture

This layer asks what users believe is changing, whether language implies abandonment of human responsibility, and whether the product still signals care at moments that matter.

If Layer 1 advances while Layers 2 and 3 lag, backlash risk rises quickly even when internal metrics look strong.
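One way to make that lag visible is to score each layer on the same scale and flag any layer that trails Layer 1 by more than an agreed gap. The sketch below is illustrative only: the 0-5 scale, the `LayerScores` fields, and the `max_gap` default are assumptions for this example, not anything Duolingo has published.

```python
from dataclasses import dataclass


@dataclass
class LayerScores:
    """Self-assessed maturity per layer on a hypothetical 0-5 scale."""
    productivity: int             # Layer 1
    quality_accountability: int   # Layer 2
    trust_communication: int      # Layer 3


def lagging_layers(scores: LayerScores, max_gap: int = 1) -> list[str]:
    """Return the layers that trail Layer 1 by more than max_gap points."""
    lagging = []
    if scores.productivity - scores.quality_accountability > max_gap:
        lagging.append("quality_accountability")
    if scores.productivity - scores.trust_communication > max_gap:
        lagging.append("trust_communication")
    return lagging


# Example: strong automation, weak ownership, adequate messaging.
team = LayerScores(productivity=4, quality_accountability=2, trust_communication=3)
print(lagging_layers(team))  # ['quality_accountability']
```

The scores themselves are judgment calls. The value of the exercise is that it forces all three layers into the same review instead of letting Layer 1 metrics stand in for the other two.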

| Transition question | If answered poorly | If answered well |
|---|---|---|
| "Where can AI run without damaging trust?" | Teams automate by convenience | Teams automate by risk-bounded workflow mapping |
| "Who owns quality when AI output scales?" | Responsibility diffuses | Ownership and escalation paths are explicit |
| "How do we communicate change?" | Efficiency-first messaging | User-value and accountability-first messaging |

What leaders should do differently

Most AI transition plans are overbuilt on tooling and underbuilt on governance rhythm.

A durable plan should include:

- A workflow map that distinguishes low-consequence automation from trust-sensitive interactions.

- A release policy that ties automation scope to quality evidence and rollback readiness.

- A communication model that explains how human accountability remains visible where users care most.

This sounds basic. It is also where most teams fail under pressure.
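A release policy of this kind can be written down as a small gate. The following is a minimal sketch under stated assumptions: the two risk tiers, the error-rate thresholds, and the `ReleaseEvidence` fields are hypothetical placeholders that a team would replace with its own workflow map and quality data.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    LOW_CONSEQUENCE = "low_consequence"
    TRUST_SENSITIVE = "trust_sensitive"


@dataclass
class ReleaseEvidence:
    sampled_error_rate: float    # share of reviewed AI outputs with defects
    rollback_tested: bool        # has a rollback been rehearsed for this workflow?
    human_owner: Optional[str]   # named person accountable for quality, if any


# Hypothetical thresholds; tune per workflow.
MAX_ERROR_RATE = {
    RiskTier.LOW_CONSEQUENCE: 0.05,
    RiskTier.TRUST_SENSITIVE: 0.01,
}


def may_expand_automation(
    tier: RiskTier, evidence: ReleaseEvidence
) -> tuple[bool, list[str]]:
    """Return (allowed, blocking_reasons) for widening automation scope."""
    reasons = []
    if evidence.sampled_error_rate > MAX_ERROR_RATE[tier]:
        reasons.append("error rate above threshold for this tier")
    if not evidence.rollback_tested:
        reasons.append("rollback not rehearsed")
    if tier is RiskTier.TRUST_SENSITIVE and not evidence.human_owner:
        reasons.append("no named human owner for a trust-sensitive workflow")
    return (not reasons, reasons)
```

The design choice worth noting is that the gate returns reasons, not just a yes or no. Under deadline pressure, a blocked release with a named cause is easier to defend than a silent override.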

Why market reaction is not a single-variable verdict

Public market movement is noisy. Equity repricing can reflect guidance changes, macro risk appetite, expansion and compression of valuation multiples, execution concerns, and narrative shifts all at once.

So treating one stock move as final proof of "AI replacement failure" is weak analysis. But ignoring customer trust effects because "markets are noisy" is also weak analysis.

Use market reaction as a signal to investigate operating assumptions, not as a simple referendum on whether AI is good or bad.

The deeper operating question is repeatability. Can your organization keep shipping high-volume improvements while preserving confidence in quality and intent over multiple quarters? If the answer is unclear, short-term productivity gains may still be real, but they will not compound into durable brand and product advantage.

Practical red flags in your own org

If your company is on an AI-first path, these red flags deserve immediate review:

- Teams celebrating throughput gains while customer confidence signals drift down.

- Messaging that emphasizes headcount displacement more than outcome quality.

- No explicit owner for high-consequence quality decisions after automation rollout.

- Release cadence increasing while incident learning quality decreases.

When these patterns appear together, trust debt usually compounds faster than leadership notices.

Speed without accountability is not transformation. It is deferred failure.

Common objections

"If we avoid replacement language, we will move too slowly"

Clear trust framing does not require slower execution. It requires better boundary design and explicit ownership.

"Users do not care who or what made the content"

Users often care when quality feels less reliable, less contextual, or less human at sensitive moments. They may not articulate this technically, but behavior will reflect it.

"This is just social media noise"

Sometimes it is. But repeated trust signals across channels usually indicate a real product-perception shift worth investigating.

Next move

If you are leading an AI transition this quarter, run a 30-day trust and execution audit:

- Identify where automation currently adds value and where it adds trust risk.

- Define explicit human accountability points for high-consequence output classes.

- Rewrite external messaging to emphasize user outcomes, quality controls, and ownership clarity.

- Add one recurring review that scores velocity, quality drift, and trust signals together.

If you only track velocity, you will overestimate progress. If you track velocity plus trust architecture, you can scale without losing the brand value that made the product durable.
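The recurring review in the last audit step can start as something very small. This sketch assumes three period-over-period deltas that a team already measures in some form; the field names, sign conventions, and verdict labels are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReviewSnapshot:
    velocity_change: float  # e.g. +0.30 = 30% more shipped units than last period
    quality_drift: float    # e.g. +0.02 = defect rate rose by 2 points
    trust_change: float     # e.g. -0.05 = trust signal (CSAT, retention) fell 5 points


def review_verdict(snapshot: ReviewSnapshot) -> str:
    """Score velocity, quality drift, and trust together, never velocity alone."""
    quality_worse = snapshot.quality_drift > 0
    trust_worse = snapshot.trust_change < 0
    if quality_worse or trust_worse:
        return "investigate: quality or trust is slipping"
    if snapshot.velocity_change > 0:
        return "healthy: velocity is up with stable quality and trust"
    return "flat: no velocity gain and no regression"


# Example: output is up 30%, but defects rose and trust fell.
print(review_verdict(ReviewSnapshot(0.30, 0.02, -0.05)))
```

Checking quality and trust before velocity is deliberate. A period with strong throughput and slipping trust should read as a problem, not as a win with a footnote.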

Bottom line

The Duolingo case is not a simple anti-AI story. It is a case study in execution sequencing.

AI can and should redesign repetitive content operations at scale. But full replacement framing, without visible quality accountability and trust-aware communication, creates avoidable downside.

The winning model is not AI versus humans. It is AI for leverage, humans for accountable judgment, and systems that make that contract clear to users.